What I Didn't See at the Small Business Expo: Real AI Implementation

The Small Business Expo at Javits Center revealed a troubling gap: AI buzzwords everywhere, substantive implementation nowhere. Most vendors confused marketing automation with actual AI systems.

The Small Business Expo at Javits Center this week promised AI everywhere. Booth after booth claimed AI-powered solutions. What I actually found was a masterclass in marketing theater.

"AI-powered audit and controls" turned out to be Excel automations. "Your book done in 90 days with AI" meant glorified templates. Tallywise pitched AI accounting. SmallBizAI.com asked "Can AI find your small business?" An "AI Caller" promised automated outreach. NClouds offered "From Idea to AI-Enabled Small Business."

The pattern was consistent. Vendors slapped AI labels on existing software without changing the underlying systems. They treated AI as a chatbot add-on, not as something embedded into actual workflows.

Almost nobody could articulate the difference between an LLM and a deterministic system. Nobody discussed what AI is bad at. Edge AI was absent from conversations. The substance wasn't there.

70% of vendors mentioned AI in their pitch. Fewer than 10% could explain their implementation beyond "we use ChatGPT."

This reveals two problems brewing in the small business market.

The Bubble Risk

Small businesses are confusing "I made a deck with AI" with "I built an AI-powered business." These are fundamentally different things. One is using a tool to create marketing materials. The other is systematically automating core business processes.

Everyone at the expo was using ChatGPT or Claude to make slick presentations and websites. The visual output looked professional. But underneath, the business logic remained unchanged. They automated the cosmetics, not the operations.

This creates dangerous expectations. Small business owners see polished AI-generated marketing and assume the business model has evolved too. It hasn't. They're buying software that looks modern but operates like legacy systems.

The disconnect will catch up with vendors. When AI-marketed solutions perform like traditional software, trust erodes. When businesses realize they paid premium prices for standard functionality plus chatbot features, they'll pull back from legitimate AI implementations too.

The Implementation Reality

At NxtConnect, we've automated roughly 55 developers' worth of work with 15 AI agents. The lesson from that implementation contradicts everything the expo was selling.

Real AI implementation didn't reduce our need for humans. It raised the bar. We need more senior critical thinkers, more people with mathematical skills, more people who understand the software lifecycle end-to-end.

AI agents need supervision. Generated code needs someone who can actually read it. Automated workflows require human oversight at decision points. The technology amplifies human capability, but only when humans understand what they're amplifying.
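A minimal sketch of what "human oversight at decision points" can look like in code, assuming each agent output carries some confidence score and that the business has flagged certain actions as high-stakes. The `AgentOutput` type, the `route` function, and the 0.85 threshold are hypothetical illustrations, not a description of how NxtConnect's agents are wired.

```python
from dataclasses import dataclass

# Hypothetical threshold; in practice it would be tuned against human-reviewed samples.
REVIEW_THRESHOLD = 0.85


@dataclass
class AgentOutput:
    task_id: str
    result: str
    # Assumed score in [0, 1] from the agent or a separate checker.
    # LLM self-reported confidence is a rough signal, not a guarantee.
    confidence: float


def route(output: AgentOutput, high_stakes: bool) -> str:
    """Decide whether an agent's output proceeds or goes to a person."""
    if high_stakes:
        # Decision points that move money or reach customers always get a human.
        return "human_review"
    if output.confidence < REVIEW_THRESHOLD:
        # Low-confidence work is queued for review rather than silently shipped.
        return "human_review"
    return "auto_approve"


if __name__ == "__main__":
    print(route(AgentOutput("t-101", "draft reply", 0.92), high_stakes=False))
    print(route(AgentOutput("t-102", "refund approval", 0.97), high_stakes=True))
```

The design choice worth noting is that high-stakes actions skip the score entirely: a confident model can still be wrong, so some decisions stay with a person regardless.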

The expo vendors were selling the opposite story. They promised AI would eliminate complexity, reduce skill requirements, and automate everything. That's not how production AI systems work.

Effective AI requires deeper technical understanding, not less. You need to know when the system will fail, where human intervention is required, how to validate automated outputs. The skill bar goes up, not down.
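Validating automated outputs usually means checking an LLM's answer with deterministic rules it cannot talk its way around. The sketch below assumes a hypothetical invoice-extraction step whose output is a dict with `vendor`, `line_items`, and `total`; the field names and the specific checks are illustrative, not taken from any particular product.

```python
from decimal import Decimal, InvalidOperation


def validate_invoice(extracted: dict) -> list[str]:
    """Check model-extracted invoice fields; an empty list means no problems found."""
    problems = [f"missing field: {f}" for f in ("vendor", "line_items", "total") if f not in extracted]
    if problems:
        return problems
    try:
        # Decimal avoids float rounding when comparing currency amounts.
        total = Decimal(str(extracted["total"]))
        items_sum = sum((Decimal(str(x)) for x in extracted["line_items"]), Decimal("0"))
    except (InvalidOperation, TypeError):
        return ["non-numeric amount"]
    if not (total.is_finite() and items_sum.is_finite()):
        # NaN or Infinity parsed successfully but is not a usable amount.
        return ["non-numeric amount"]
    if total < 0:
        problems.append("negative total")
    # An LLM can misread a digit; a sum check catches that deterministically.
    if items_sum != total:
        problems.append(f"line items sum to {items_sum}, total says {total}")
    return problems


if __name__ == "__main__":
    good = {"vendor": "Acme", "line_items": ["120.00", "35.50"], "total": "155.50"}
    bad = {"vendor": "Acme", "line_items": ["120.00", "35.50"], "total": "165.50"}
    print(validate_invoice(good))
    print(validate_invoice(bad))
```

Returning a list of problems rather than a single pass/fail gives the human reviewer a reason, which is what makes the check useful at an intervention point.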

What Was Missing

Three critical elements were absent from expo conversations.

First, nobody discussed failure modes. Real AI implementations fail in predictable ways. LLMs hallucinate. Models drift over time. Edge cases break automation. Production systems need fallback procedures.
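A fallback procedure can be sketched as a chain: try the model, reject answers outside an approved list, fall back to deterministic rules, and queue for a person if nothing trustworthy comes back. The expense-categorization task, the `categorize_with_fallback` name, and the keyword rules are hypothetical; `model_call` stands in for whatever LLM client a business actually uses.

```python
from typing import Callable


def categorize_with_fallback(
    description: str,
    model_call: Callable[[str], str],
    allowed: set[str],
    rules: dict[str, str],
) -> tuple[str, str]:
    """Return (category, source) so every answer records which path produced it."""
    try:
        answer = model_call(description).strip().lower()
        if answer in allowed:
            return answer, "model"
        # An off-list label is treated as a hallucination and discarded.
    except Exception:
        # Timeouts, rate limits, and provider outages all end up here in this sketch.
        pass
    # Deterministic fallback: simple keyword rules that behave the same every time.
    text = description.lower()
    for keyword, category in rules.items():
        if keyword in text:
            return category, "rules"
    # Last resort: nothing trustworthy was produced, so a person decides.
    return "needs_review", "human_queue"


if __name__ == "__main__":
    allowed = {"travel", "meals", "software"}
    rules = {"uber": "travel", "lunch": "meals"}

    def flaky_model(text: str) -> str:
        raise TimeoutError("provider did not respond")

    print(categorize_with_fallback("Uber to client site", flaky_model, allowed, rules))
```

Tagging each result with its source also feeds measurement: a rising share of `"rules"` or `"human_queue"` answers is an early sign of drift or an outage.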

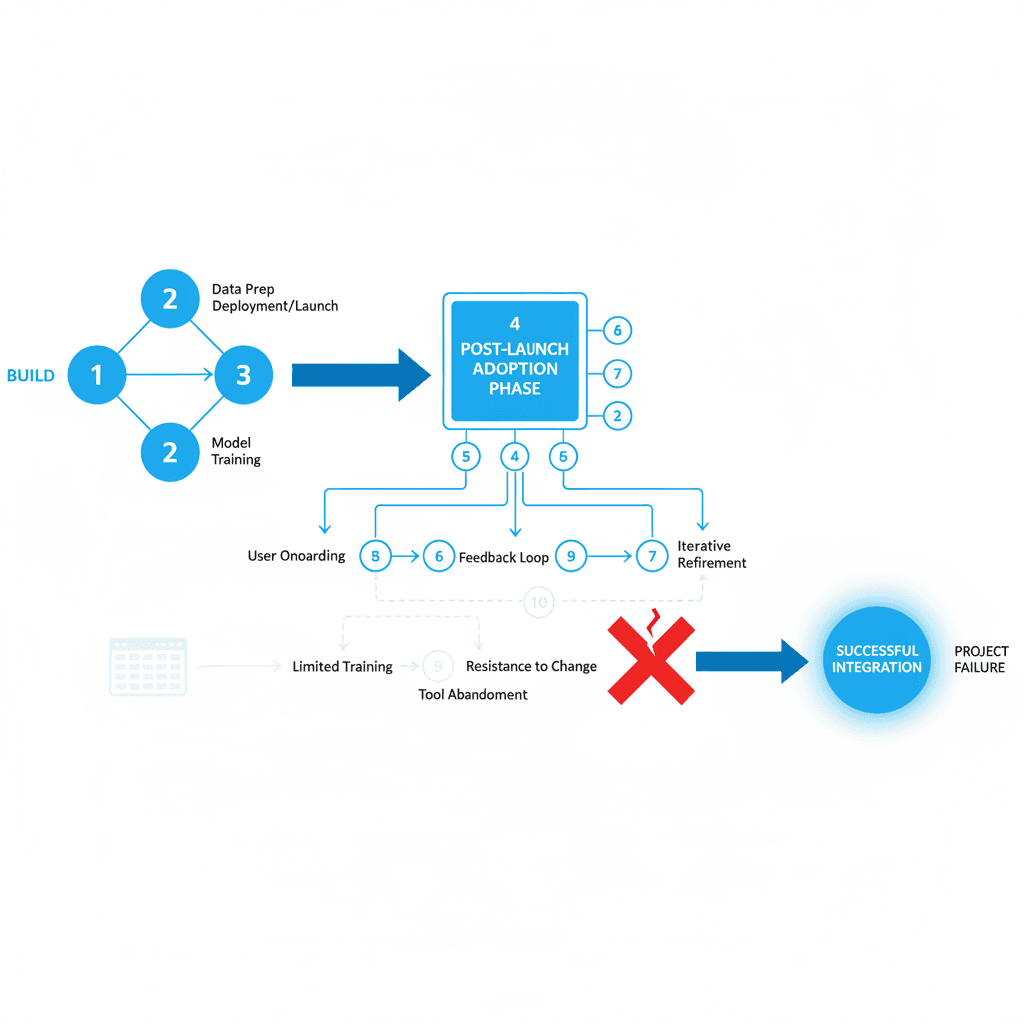

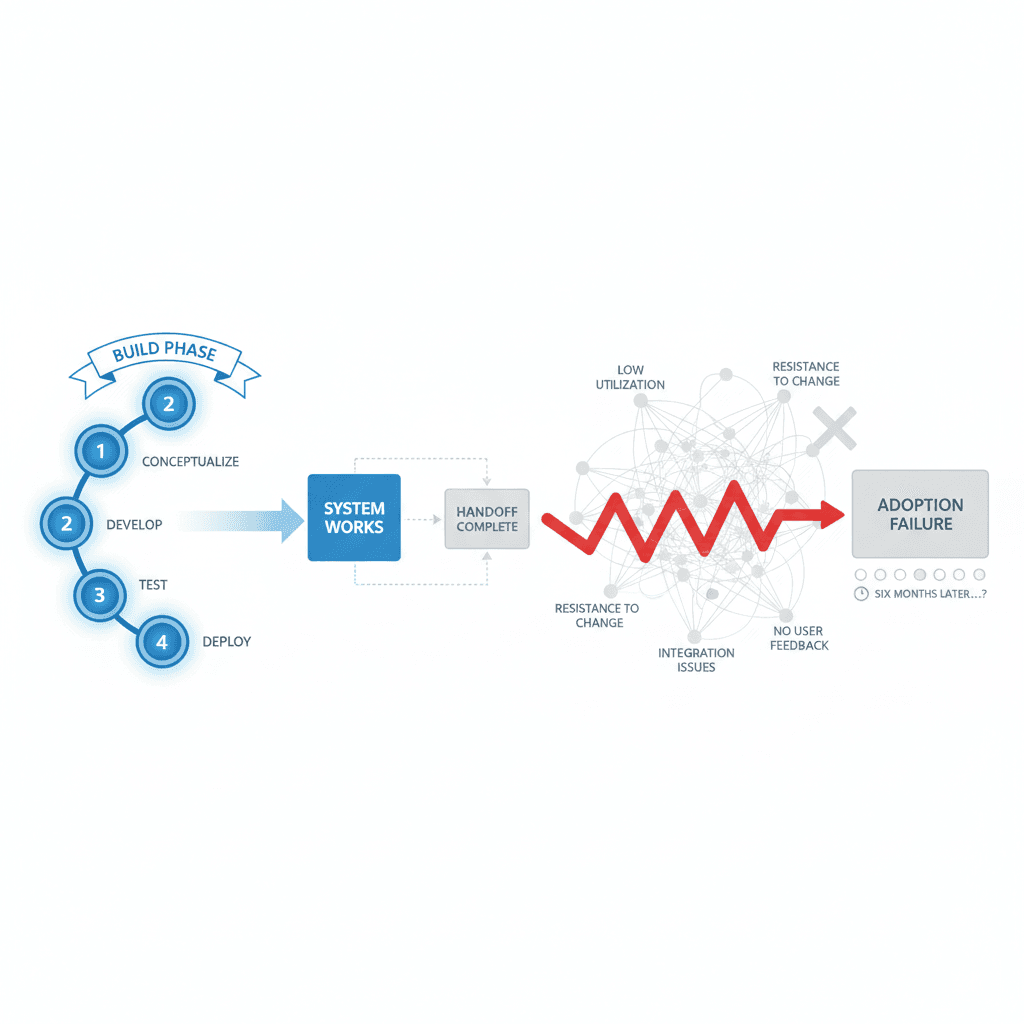

Second, nobody addressed integration complexity. AI doesn't exist in isolation. It needs to connect with existing databases, APIs, and user interfaces. The integration layer is where most AI projects fail.
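Much of the integration work is making AI output respect the rules the existing system already enforces. A minimal sketch using Python's built-in `sqlite3`, assuming a hypothetical existing schema with an `expenses` table and a `categories` lookup table: the write goes through only if the category already exists, never overwrites a value a person entered, and records where the value came from.

```python
import sqlite3


def write_ai_category(conn: sqlite3.Connection, expense_id: int, category: str, source: str) -> bool:
    """Write an AI-produced category into the existing expenses table; return True if a row changed."""
    # Respect the existing system's vocabulary instead of inventing new categories.
    known = conn.execute("SELECT 1 FROM categories WHERE name = ?", (category,)).fetchone()
    if known is None:
        return False
    with conn:  # commits on success, rolls back if the UPDATE raises
        cur = conn.execute(
            # Only fill empty fields, so human-entered values are never overwritten.
            "UPDATE expenses SET category = ?, category_source = ? "
            "WHERE id = ? AND category IS NULL",
            (category, source, expense_id),
        )
    return cur.rowcount == 1


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE categories (name TEXT PRIMARY KEY);
        CREATE TABLE expenses (id INTEGER PRIMARY KEY, category TEXT, category_source TEXT);
        INSERT INTO categories VALUES ('travel'), ('meals');
        INSERT INTO expenses (id) VALUES (1);
        INSERT INTO expenses (id, category, category_source) VALUES (2, 'meals', 'human');
        """
    )
    print(write_ai_category(conn, 1, "travel", "model"))     # empty field, known category
    print(write_ai_category(conn, 2, "travel", "model"))     # human value already present
    print(write_ai_category(conn, 1, "spaceship", "model"))  # unknown category
```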

Third, nobody talked about measurement. How do you know if your AI implementation is working? What metrics matter? When do you intervene? The expo vendors treated AI as a magic box that produces better results on its own, with no measurement framework behind it.
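To make "what metrics matter" concrete, here is a sketch that computes the three metrics suggested in the Key Questions at the end of this piece (time saved, error reduction, and human intervention frequency) from a log of completed tasks. The `TaskRecord` fields, the baseline error rate, and the 30% intervention threshold are hypothetical; the baseline numbers in particular have to be measured before automation, not estimated afterward.

```python
from dataclasses import dataclass


@dataclass
class TaskRecord:
    baseline_minutes: float  # time the task took before automation (measured beforehand)
    actual_minutes: float    # human time spent after automation, including review
    had_error: bool          # an error was found downstream
    human_intervened: bool   # a person had to correct or take over the output


def summarize(records: list[TaskRecord], baseline_error_rate: float) -> dict[str, float]:
    """Compute time saved, error reduction, and intervention rate for a batch of tasks."""
    n = len(records)
    if n == 0:
        raise ValueError("no task records to summarize")
    error_rate = sum(r.had_error for r in records) / n
    return {
        "minutes_saved": sum(r.baseline_minutes - r.actual_minutes for r in records),
        "error_rate": error_rate,
        "error_reduction": baseline_error_rate - error_rate,
        "intervention_rate": sum(r.human_intervened for r in records) / n,
    }


def needs_attention(summary: dict[str, float], max_intervention_rate: float = 0.3) -> bool:
    """Flag the workflow for review when people keep stepping in or errors rise."""
    return summary["intervention_rate"] > max_intervention_rate or summary["error_reduction"] < 0


if __name__ == "__main__":
    log = [
        TaskRecord(30, 10, False, False),
        TaskRecord(30, 20, True, True),
        TaskRecord(30, 5, False, True),
        TaskRecord(30, 5, False, False),
    ]
    s = summarize(log, baseline_error_rate=0.4)
    print(s, needs_attention(s))
```

Counting review time inside `actual_minutes` matters: an automation that saves 20 minutes of work but needs 25 minutes of checking is a net loss, and this sketch would report it as one.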

The Real Work

The gap between expo promises and implementation reality represents an opportunity. Small businesses need AI solutions that actually work, not marketing theater that looks impressive.

That means building systems that integrate with existing workflows. It means creating measurement frameworks that show real business impact. It means designing fallback procedures for when automation fails.

The businesses that figure this out first will have significant advantages. They'll automate real work while competitors automate presentations. They'll build sustainable efficiency gains while others chase AI-washing trends.

The expo was a snapshot of where the market still is: AI as a marketing layer, not as operational craft. We're building websites that look smart without understanding the business needs underneath.

That's the gap. That's also the work.

Key Questions

Q: How can small businesses distinguish between real AI implementation and AI marketing?

A: Ask vendors to explain their failure modes, integration requirements, and measurement frameworks. Real AI implementations have documented answers for all three.

Q: What should small businesses prioritize when evaluating AI solutions?

A: Focus on workflow integration over feature lists. AI should be embedded in existing processes, not add new layers of complexity.

Q: Why do most AI implementations fail in small business environments?

A: Lack of integration planning, unrealistic expectations about automation scope, and insufficient human oversight for AI-generated outputs.

Q: How do you measure AI implementation success?

A: Track time saved on specific tasks, error reduction rates, and human intervention frequency. Avoid vanity metrics like "AI-powered features deployed."

Q: What skills do small businesses need to implement AI successfully?

A: Technical literacy to understand system limitations, process mapping to identify automation opportunities, and measurement capability to track implementation results.


Want more like this?

Subscribe to Field Notes — weekly observations from inside real AI engagements. Free.