Why Most AI Projects Succeed at Build and Fail at Adoption

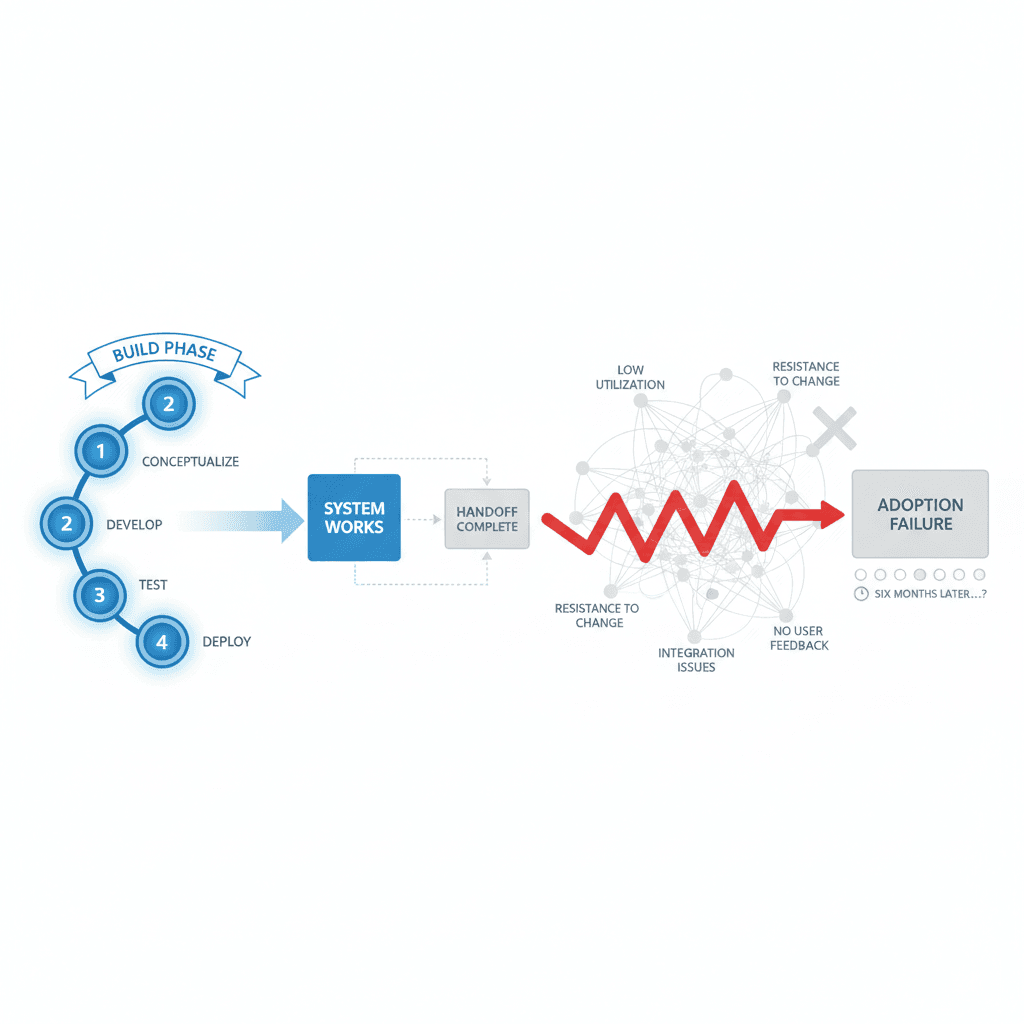

We see this pattern across every industry we work in — market research, law, real estate, consulting. The build phase is complete. The system works. The handoff slides look good. Six months later, half the team has switched back to Excel.

Most AI agencies don't price this phase into their engagements. They deliver and leave. We don't — and the reason is experience, not marketing.

The Technical vs Cultural Split

An AI implementation doesn't fundamentally differ from introducing other enterprise technology. It needs technical implementation — yes. But it needs just as much work on cultural adoption, team buy-in, and willingness to actually use the tool daily.

Adoption isn't a two-minute task. It needs time and resources like any serious change project.

Technical implementation makes up about 30 percent of the work. Cultural adoption makes up the other 70 percent.

What we learned from engagements at SIS International, at the boutique music law firm, at the New York real estate company — three different industries, same pattern:

The teams that succeed with AI treat AI as a change management problem, not just a software installation problem. They adapt, learn, experiment — and treat the first version as the beginning of work, not the end.

Why Build Phases Look So Good

Build phases have clear success metrics. The model processes data. The API returns responses. The interface renders correctly. You can demo the system. The client signs off.

This is measurable, deliverable, billable work. Most agencies stop here because it's the clean exit point. The technical requirements are met. The contract is fulfilled.

But software that works isn't software that gets used. We learned this lesson across industries:

The market research firm needed their analysts to trust AI-generated insights enough to present them to Fortune 500 clients. Trust doesn't ship with the code.

The law firm needed partners to feel confident using AI for contract review. Confidence requires practice, iteration, and gradual exposure.

The real estate company needed agents to incorporate AI recommendations into their existing client workflows. Integration requires understanding how people actually work, not how they say they work.

The Real Work Starts After Launch

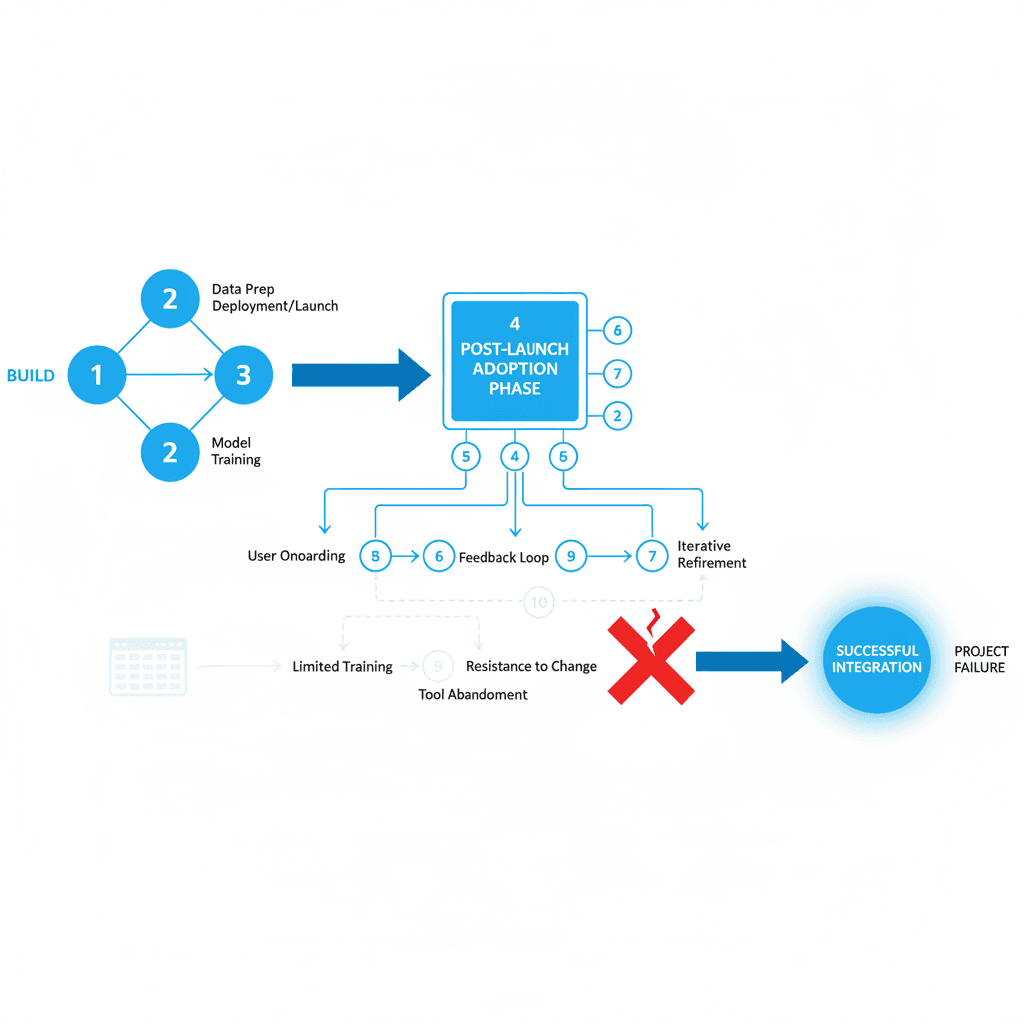

Embed — the fourth phase of our engagement model after Audit, Build, Automate — is the part that makes the rest valuable. Team training that sticks. Documentation that actually gets read. Adoption metrics that matter. Iterative refinement based on real usage.

We don't disappear after launch. Month four is where the real project begins.

Here's what Embed looks like in practice:

Week 1-2: Installation and basic training. Teams learn the interface, understand core functions, complete simple tasks.

Week 3-6: Gradual integration. Teams start incorporating AI into daily workflows. We track usage patterns, identify friction points, adjust training materials.

Week 7-12: Optimization and refinement. Based on actual usage data, we modify workflows, update prompts, enhance training, address resistance patterns.

Month 4-6: Full integration. Teams use AI as part of their standard process. We measure impact, document learnings, plan next improvements.

What Agencies Miss About Adoption

Most AI agencies underestimate the human side of implementation. They assume that because the technology works, people will use it. This assumption fails consistently.

People have existing workflows that work. They have tools they trust. They have habits built over years. Introducing AI means asking them to change fundamental work patterns.

Change requires more than training sessions. It requires ongoing support, iteration based on feedback, and patience with the learning curve.

Agencies that focus only on the build miss three critical adoption factors:

Trust building: Teams need to see AI work correctly multiple times before they trust it with important tasks.

Workflow integration: AI needs to fit into existing processes, not replace them entirely.

Gradual expansion: Starting with low-risk use cases and expanding based on success builds confidence.

Why We Stay Through Embed

When you're scoping AI for your company and the agency you're talking to only discusses what they'll build — ask them what happens in month four. The answer to that question is the real project.

We stay because we've seen what happens when you don't. The boutique law firm that built a brilliant contract analysis system — then watched it sit unused because partners were nervous about AI recommendations for million-dollar deals.

The market research company that created sophisticated sentiment analysis — then saw analysts default to manual methods because they didn't understand how to incorporate AI insights into client reports.

The real estate firm that implemented intelligent lead scoring — then watched agents ignore the scores because they didn't trust the underlying data quality.

These weren't technical failures. These were adoption failures. The systems worked perfectly. The teams just didn't use them.

Measuring Real Success

Success in AI implementation isn't measured by whether the system works. It's measured by whether people use it consistently, confidently, and effectively.

Our Embed metrics track:

Daily active users across team functions

Task completion rates using AI vs manual methods

Time savings on routine processes

Quality improvements in output

Team confidence scores in using AI for different task types

These metrics take months to stabilize. You can't measure them at launch. You need ongoing engagement to capture real usage patterns.
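As a rough illustration, the two usage-based metrics above (daily active users, and AI vs manual task completion) can be computed from a simple usage log. This is a minimal sketch, not our production tooling: the log format, the user names, the task types, and the function names `daily_active_ai_users` and `ai_task_share` are all assumptions made up for the example.

```python
from collections import defaultdict
from datetime import date

# Hypothetical usage log: (day, user, task_type, method).
# "method" is "ai" or "manual". All values are illustrative, not real data.
events = [
    (date(2024, 3, 4), "ana", "contract_review", "ai"),
    (date(2024, 3, 4), "ben", "contract_review", "manual"),
    (date(2024, 3, 4), "ana", "summary", "ai"),
    (date(2024, 3, 5), "ana", "contract_review", "ai"),
    (date(2024, 3, 5), "cy", "summary", "manual"),
    (date(2024, 3, 5), "ben", "summary", "ai"),
]


def daily_active_ai_users(events):
    """Count distinct users who completed at least one task with AI, per day."""
    users_by_day = defaultdict(set)
    for day, user, _task, method in events:
        if method == "ai":
            users_by_day[day].add(user)
    return {day: len(users) for day, users in sorted(users_by_day.items())}


def ai_task_share(events):
    """Fraction of completed tasks done with AI rather than manually, per task type."""
    totals = defaultdict(int)
    ai_counts = defaultdict(int)
    for _day, _user, task, method in events:
        totals[task] += 1
        if method == "ai":
            ai_counts[task] += 1
    return {task: ai_counts[task] / totals[task] for task in totals}


dau = daily_active_ai_users(events)
share = ai_task_share(events)

for day, count in dau.items():
    print(day.isoformat(), "active AI users:", count)
for task, fraction in share.items():
    print(task, "AI share:", round(fraction, 2))
```

Tracked week over week, a flat or falling AI share for a given task type is an early signal that the team is reverting to manual methods for that task, even when overall logins look healthy.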

The companies that succeed with AI treat the first deployment as the beginning of an iterative process. They plan for months of adjustment, learning, and optimization.

The companies that struggle treat deployment as the end of the project. They expect immediate adoption and are surprised when teams revert to familiar tools.

Key Questions

Q: How long should we expect the adoption phase to take?

A: Plan for 3-6 months of active adoption work after technical deployment. Simple tools might achieve full adoption in 6-8 weeks; complex workflows can take 4-6 months.

Q: What's the biggest factor in successful AI adoption?

A: Gradual integration that respects existing workflows. Teams that try to completely replace existing processes see higher resistance than teams that start with AI-assisted versions of current tasks.

Q: How do we know if our team is actually adopting the AI system?

A: Track daily usage rates, task completion through AI vs manual methods, and team confidence surveys. Usage patterns tell you more than satisfaction scores.

Q: Should we hire an agency that focuses only on build or one that includes adoption support?

A: Choose agencies that include post-deployment support. The technical build is the easier part — adoption is where most projects succeed or fail.

Q: What happens if our team resists using the AI system after deployment?

A: Resistance is normal and expected. The key is structured support: additional training, workflow adjustments, and addressing specific concerns rather than pushing harder on adoption.
